Use Test Sets to Evaluate 3Chat Agent Before Go-Live

Feature Overview

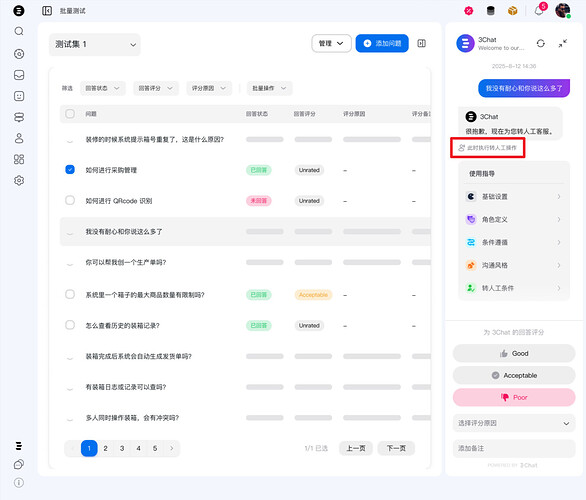

3Chat’s Test Set feature helps you batch-create, import, and evaluate test cases to quickly check how your agent performs across different scenarios. You can create test sets by adding questions or uploading files, then run tests to generate reports, and further score and analyze the answers. This feature improves the efficiency of quality management and optimization for your agent.

Quick Operation Guide

Enter the Feature Page

- Log in to the 3Chat Agent Management Console.

- In the left navigation bar, go to 3Chat Agent → Testing → Test Sets to open the Test Sets page.

Create a New Test Set

When using Test Sets for the first time, you’ll land on an initialization page where you can start adding test sets and test questions. You can add a test set in two ways:

- Click “Add Directly” to manually enter questions

- Or import via file upload (supports .xls, .xlsx, .xlsm, and .csv)

Use Add Directly: Manually enter questions

- Click the “Add Directly” card to open the add modal. The default name is “Test Set 1”, and you can modify it.

- Enter the questions you want in the test set, and click “Add Question” to input multiple items. You can edit or delete your questions at any time.

- You can also copy-paste questions in bulk from Word or Excel if they are line-separated. You can add up to 50 questions at a time. If more than 50 are provided, the system will keep only the first 50.

- After entering the questions, click “Save” to create the test set.
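To check a pasted batch before you submit it, the line-separated rule and the 50-question limit can be sketched in Python. This is only an illustration of the behavior described above, not 3Chat's implementation: the function name `parse_pasted_questions` is hypothetical, and dropping blank lines is an assumption.

```python
MAX_QUESTIONS = 50  # per-batch limit stated in this guide


def parse_pasted_questions(text: str, limit: int = MAX_QUESTIONS) -> list[str]:
    """Split pasted text into one question per line and keep the first `limit`.

    Assumption: blank lines are ignored and surrounding whitespace is trimmed.
    """
    questions = [line.strip() for line in text.splitlines() if line.strip()]
    return questions[:limit]


# Example: 60 pasted lines are cut down to the first 50.
pasted = "\n".join(f"Question {i}" for i in range(1, 61))
kept = parse_pasted_questions(pasted)
print(len(kept), kept[-1])
```

Running a check like this on your own list shows which questions would fall past the limit, so you can move them into a second batch.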

Use Upload File: Bulk import questions

- Click the “Upload File” card to open the add modal. The default name is “Test Set 1”, and you can modify it.

- Click or drag a file to upload. Supported formats: .xls, .xlsx, .xlsm, .csv. Make sure your questions are in the first column. We will read the first 50 non-empty rows from the first column and add them as test questions.

- You can click “Clear File” to reselect a file. Only one file can be uploaded at a time. After the upload succeeds, click “Upload” to create the test set.
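To preview which rows of a CSV file would become test questions, the rule above (first column, first 50 non-empty rows) can be approximated locally. The sketch below uses Python's standard `csv` module. The function name `preview_first_column` is hypothetical, and because this guide does not say whether a header row is skipped, the sketch treats every row as a question.

```python
import csv
import io

MAX_ROWS = 50  # number of non-empty rows this guide says are read


def preview_first_column(csv_text: str, limit: int = MAX_ROWS) -> list[str]:
    """Return the first `limit` non-empty cells from the first column."""
    questions = []
    for row in csv.reader(io.StringIO(csv_text)):
        # A blank line yields an empty row; an empty first cell is skipped too.
        cell = row[0].strip() if row else ""
        if cell:
            questions.append(cell)
        if len(questions) == limit:
            break
    return questions


sample = (
    "How do I reset my password?,this column is ignored\n"
    "\n"
    ",first column is empty so this row is skipped\n"
    "What are your hours?\n"
)
print(preview_first_column(sample))
```

Content in other columns is not picked up by this sketch, which mirrors the instruction to keep your questions in the first column.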

Run Tests and Generate Reports

After a test set is created, the system will automatically run the added questions and batch-request single-turn replies from the 3Chat Agent.

View Answer Details

- On the Test Sets page, you can see the list of test items in the running test set, with the columns Question, Answer Status, Score, and Reason for Score. Click a test item to view details such as your test question, the Agent’s answer, and the guidance used for that answer.

- Answer Status is determined by the system and is either “Answered” or “Not Answered”. You provide the answer score and the reason for the score.

  - Answered: The Agent provided a valid response to the question.

  - Not Answered: The Agent failed to provide a valid response.

- Your test questions may involve different scenarios and might trigger handoff to a human or AI Tasks. In such cases, a small note below the answer indicates that the question can trigger a handoff or execute tasks in production, but no actual operations are performed within the Test Set environment.

Update Answers

- Bulk actions: Select one or multiple test items to bulk Update Answers or Delete Questions. Completed test items can be edited; incomplete ones are view-only.

- If you’re not satisfied with the Agent’s answer, click Refresh at the top of the detail panel to attempt an updated answer.

Score the Answers

- Choose an answer rating in the detail panel: “Good”, “Acceptable”, or “Poor” to evaluate the Agent’s answer quality. You can also add an optional note.

- If you choose “Good” or “Acceptable”, no further reason is required. If you choose “Poor”, click “Select Reason” to pick a reason for the poor rating and guide further improvements.

- Available reasons include: Relevant content not used, Wrong content usage, Inappropriate tone, Answer too long/too short, Did not reflect role identity, Did not follow communication style, Did not follow handoff rules, Did not follow constraints, Task not triggered correctly, Task not executed correctly, Other. Choose the most suitable reason, because it ties directly to the subsequent Improve Answer operation. After selecting, click “Improve Answer” to view suggestions.

- Improve Answer: Different reasons generate different improvement suggestions. You can also click “Create or Update AI Task” or “Create or Update Guidance” to jump to the AI Tasks or Guidance feature pages for further configuration to enhance the Agent’s answer.
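If you track scores outside 3Chat, for example in a review spreadsheet, the scoring rules above can be expressed as a small validation step. This is a sketch only: `validate_score` is a hypothetical helper, and it assumes a reason is mandatory for a “Poor” rating, which this guide implies but does not state outright.

```python
RATINGS = {"Good", "Acceptable", "Poor"}

# Reasons listed in this guide for a "Poor" rating.
POOR_REASONS = {
    "Relevant content not used",
    "Wrong content usage",
    "Inappropriate tone",
    "Answer too long/too short",
    "Did not reflect role identity",
    "Did not follow communication style",
    "Did not follow handoff rules",
    "Did not follow constraints",
    "Task not triggered correctly",
    "Task not executed correctly",
    "Other",
}


def validate_score(rating, reason=None, note=""):
    """Check a rating/reason pair against the rules described in this guide."""
    if rating not in RATINGS:
        raise ValueError(f"Unknown rating: {rating!r}")
    if rating == "Poor":
        # Assumption: a listed reason must accompany a "Poor" rating.
        if reason not in POOR_REASONS:
            raise ValueError("A 'Poor' rating needs one of the listed reasons")
    else:
        reason = None  # "Good" and "Acceptable" need no reason
    return {"rating": rating, "reason": reason, "note": note}


print(validate_score("Poor", "Inappropriate tone"))
```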

Add Questions Within a Test Set

If you want to add new questions to the current test set, click “Add Question” and continue to add via direct input or file upload.

Filter Focus Questions and Answers

Filter: Customize the test set list display with different conditions. You can filter by Answer Status, Answer Score, Reason for Score, either singly or in combination.
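As a rough mental model of combined filters, the sketch below keeps only the items that match every condition you set. The `Item` record and `filter_items` function are hypothetical, and treating combined conditions as a logical AND is an assumption, since this guide does not spell out how combinations are evaluated.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Item:
    question: str
    answer_status: str             # "Answered" or "Not Answered"
    score: Optional[str] = None    # "Good", "Acceptable", "Poor", or None if unscored
    reason: Optional[str] = None   # set only for "Poor" scores


def filter_items(items, answer_status=None, score=None, reason=None):
    """Keep items matching every condition that is not None (assumed AND logic)."""
    def matches(item):
        return (
            (answer_status is None or item.answer_status == answer_status)
            and (score is None or item.score == score)
            and (reason is None or item.reason == reason)
        )
    return [item for item in items if matches(item)]


items = [
    Item("Q1", "Answered", "Good"),
    Item("Q2", "Answered", "Poor", "Inappropriate tone"),
    Item("Q3", "Not Answered"),
]
poor_answered = filter_items(items, answer_status="Answered", score="Poor")
print([item.question for item in poor_answered])
```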

Add More Test Sets

- To switch between, view, or manage test sets, expand the test set panel on the left.

- Click the “+” button to create a new test set via direct input or file upload. You can also manage existing test sets: rename them, delete them, or export their reports.

Export Test Sets

Export test set report

In the left test set list panel, choose “…” → “Export Report”, or click “Export” at the top of the list to export the current test set report. The report is added to the downloads queue and exported as CSV.
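Once you have the exported CSV, a short script can tally the scores. The column names of the export are not documented in this guide, so the header used below (`Score`, borrowed from the list columns on the Test Sets page) is an assumption, as is the helper name `count_scores`. Adjust it to match your actual file.

```python
import csv
import io
from collections import Counter


def count_scores(report_csv: str, score_column: str = "Score") -> Counter:
    """Count rows per score value; empty or missing scores count as 'Unscored'."""
    reader = csv.DictReader(io.StringIO(report_csv))
    return Counter((row.get(score_column) or "Unscored") for row in reader)


# Hypothetical report content; real exports may use different headers.
sample_report = (
    "Question,Answer Status,Score,Reason for Score\n"
    "Q1,Answered,Good,\n"
    "Q2,Answered,Poor,Inappropriate tone\n"
    "Q3,Not Answered,,\n"
)
print(count_scores(sample_report))
```

To use it on a downloaded report, read the file's text first (for example with `open(path, newline="", encoding="utf-8").read()`) and pass it to `count_scores`.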

FAQ

- How can I add questions in bulk? Use “Add Directly” to paste line-separated questions, or “Upload File” to import in bulk.

- What file formats are supported for upload? Supported formats are .xls, .xlsx, .xlsm, .csv.

- Can I edit multiple test items at once? Yes. You can bulk-update answers for selected items or bulk-delete selected items.